-

10 minutes

AI Policy Engines: How to Operationalize AI Governance for Financial Institutions

Evaluation can show whether an AI system is performing acceptably. It cannot, by itself, decide what should happen next. AI policy engines fill that gap by translating governance logic into repeatable runtime decisions across agents, applications, and workflows.

-

12 minutes

How to Evaluate AI Agents: Building a Governance Framework

AI gateways control whether a model call is allowed to happen. Evaluation systems determine whether autonomous behavior remains acceptable after that access has been granted. In financial institutions, that means measuring performance continuously, defining thresholds explicitly, and inserting human review or restrictions before failure becomes systemic.

-

10 minutes

AI Gateways: The Control Plane for Model Access

Identity, lineage, and semantics make AI systems interpretable. They do not, by themselves, control model access. AI gateways are the enforcement layer that determines whether a model call is allowed to happen at all, which model path is permitted, and what runtime constraints apply.

-

10 minutes

Semantic Layers: The Hidden Infrastructure Behind Scalable AI

Lineage can show how a decision was made. It cannot guarantee that the data, features, rules, and policy terms behind that decision meant the same thing everywhere they were used. That is the role of the semantic layer: to make business definitions machine-readable, reusable, and governable so AI systems can operate correctly at scale.

-

8 minutes

Data Lineage as the Trust Backbone of AI Governance

Most financial institutions say they have data lineage. What they usually have is a reconstruction layer: metadata inferred from logs, scheduler state, warehouse queries, notebook history, catalog scans, and pipeline definitions. That is useful for debugging. It is not enough for governance. That distinction matters more as AI moves deeper into regulated financial activity. When […]

-

7 minutes

Identity for AI Systems: The Glue That Holds AI Governance Together

AI systems are starting to behave less like tools and more like participants in an operating environment. They retrieve data, apply transformations, and trigger downstream actions with increasing autonomy. As discussed in the shift toward machine-operational metadata, these systems are no longer just interacting with documentation; they are interacting with structured, executable context. Identity is what binds these systems together across data, decisions, and execution. In practical terms, identity in AI systems refers to cryptographically verifiable identifiers for agents, datasets, and transformations that enable traceability, accountability, and enforceable governance. The system can describe what exists, including datasets, pipelines, and agents,…

-

8 minutes

Why Governance is the Precondition for Scalable AI Agents

Scalable AI agents are quickly moving from experimental tools to embedded components of enterprise infrastructure. In financial services, manufacturing, retail, and other regulated sectors, autonomous systems are beginning to interface directly with ledgers, operational databases, and reporting pipelines. As these systems evolve from conversational assistants into operational actors capable of invoking tools, modifying records, and influencing downstream decisions, their risk profile changes materially. As explored in our article on AI agents in data analytics, these systems can automate everything from data ingestion to predictive insights. Why Traceability Becomes a Governance Requirement At this stage, AI agent performance alone is no…

-

4 minutes

Automating a Risk Control Dashboard with Power BI MCP in Cursor for Free

Modern risk and control dashboards rarely fail because of visuals. They fail upstream, where definitions drift, calculations get re-implemented, and data governance lives in spreadsheets or people’s heads. In this walkthrough, I demonstrate how Power BI’s MCP (Model Context Protocol) can be used inside Cursor to automate much of that foundational work. MCP (Model Context […]

-

4 minutes

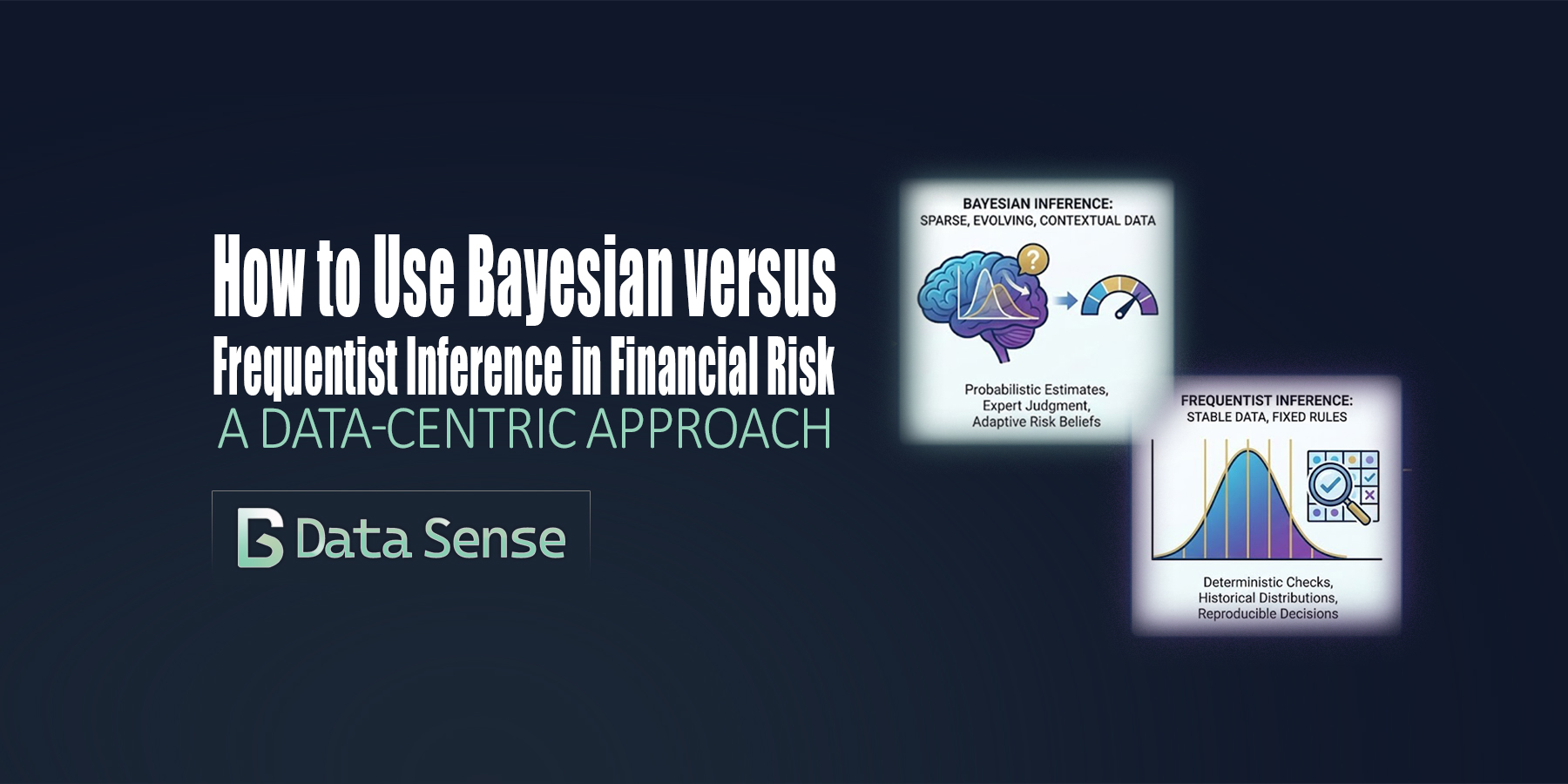

How To Use Bayesian versus Frequentist Inference

In financial risk management, debates about Bayesian versus frequentist inference are often framed as methodological or philosophical. In practice, the choice is far more pragmatic: it is primarily a data problem. Model risk, drift, and operational risk live upstream of market, credit, and liquidity models. They are shaped less by elegant theory and more by the realities of data volume, stability, and interpretability. This is where the distinction between frequentist and Bayesian inference becomes operationally meaningful.

CATEGORY ARCHIVES