AI gateways are where AI governance stops being descriptive and starts becoming operational.

A financial institution can have strong identity, usable lineage, and a well-governed semantic layer and still fail at the point that matters most: model access.

If applications, agents, and workflow services can invoke models directly, choose model providers locally, and send prompts without a consistent enforcement layer, the architecture may be interpretable. It is not yet controlled.

That is why AI gateways matter.

They are often described as middleware for routing, authentication, and spend management. That is useful. It is not enough for governance.

In regulated financial environments, AI gateways are the control plane that determines whether a model call is allowed to happen at all, which model path is permissible, what controls have to be applied, and what evidence has to be emitted.

By this point in the series, the system is already well defined.

Identity establishes what exists and who is acting. Lineage establishes what can be trusted. Semantics establishes what things mean.

That makes the environment interpretable.

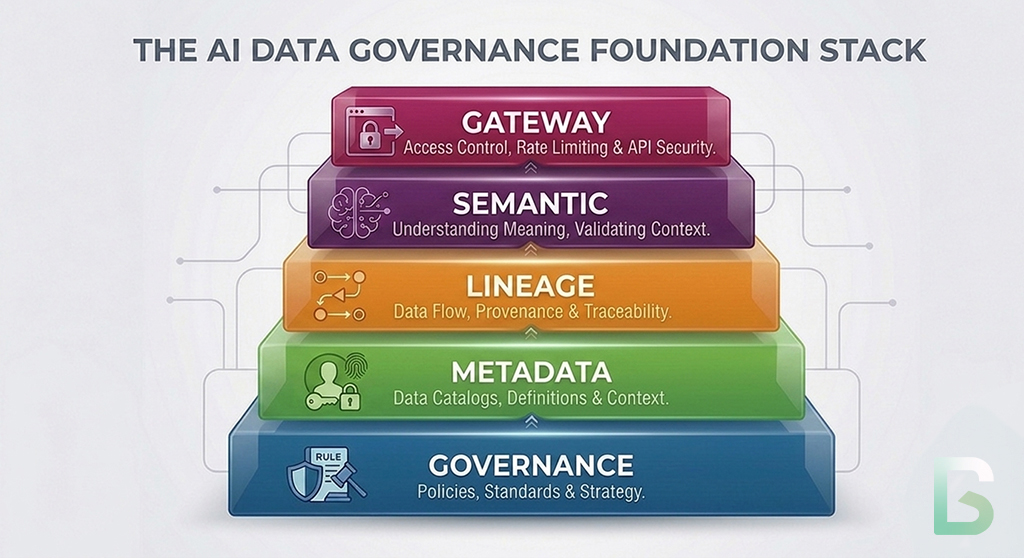

This is the next layer in the governance stack. Governance establishes authority. Metadata makes systems machine-operable. Identity resolves callers and artifacts. Lineage connects execution to evidence. Semantics stabilizes meaning. AI gateways determine whether a model call is allowed to happen at all, under which conditions, with which constraints, and through which approved path.

That is the shift from observation to control.

Without the gateway, the previous layers are largely observational.

With the gateway, they become enforceable.

Previously in the Series

This article builds directly on the earlier layers of the AI governance stack:

- Why Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs. Human Metadata

- Identity for AI Systems: The Glue That Holds AI Governance Together

- Data Lineage as the Backbone of AI Governance

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI

Why The Semantic Layer Is Not The End

A system can be interpretable and still be operationally uncontrolled.

Article 5 showed why semantic layers matter: AI systems need machine-readable meaning, not just traceable pipelines.

That was necessary.

It was not the final control layer.

Even if a firm knows what a feature means, what a risk score represents, and what a policy term resolves to, that still does not answer a more immediate governance question: should this caller be allowed to invoke this model for this purpose right now?

That is not a semantics problem.

It is an access and enforcement problem.

Financial institutions feel this quickly because AI adoption tends to spread through direct integrations. Teams add model calls into chat interfaces, customer-service tools, fraud operations workflows, research assistants, credit decisioning utilities, developer tooling, and agent frameworks. Each implementation looks locally reasonable. Collectively, they create an uncontrolled model-access estate.

Why Direct Model Access Breaks Governance

Hardcoded model calls create fragmentation faster than governance can catch up.

Most organizations today still have some combination of:

- direct API calls to model providers

- hardcoded model usage inside applications

- fragmented controls across engineering, platform, and risk teams

- inconsistent handling of prompts, outputs, and fallback behavior

- limited visibility into which agents or services are actually invoking which models

That creates three governance failures.

Inconsistent Policy Enforcement

One application strips sensitive inputs before sending a prompt. Another does not. One team blocks certain use cases from higher-risk models. Another bypasses that control through a direct integration.

No Centralized Visibility

The institution may know that models are being used. It may not know which human users, services, or agents are calling them, for what purpose, under which approved path, and with what ability to trigger downstream action.

No Runtime Constraint On Agent Behavior

An agent can be semantically well-formed and lineage-aware and still be too powerful if it can call models freely, escalate to external providers, or switch to more capable models without explicit control.

That is why direct model access is not just a platform choice.

It is a governance failure mode.

The OWASP GenAI Security Project focuses on identifying and mitigating security and safety risks in generative AI systems, including agentic applications. Those risks become harder to control when model access is fragmented across local integrations.

What An AI Gateway Actually Does

An AI gateway is not just an API proxy. It is the control plane for model access.

The common framing is too small.

An AI gateway does not merely pass requests through to a model endpoint. In a governed architecture, it becomes the layer that mediates model access across users, agents, applications, and infrastructure.

Its role is to decide whether a request may proceed, how it should proceed, which model path is permissible, what controls must be applied, and what evidence must be emitted.

That makes the gateway governance-critical infrastructure.

The NIST Zero Trust Architecture guidance (SP 800-207) is a useful analogy because it shifts control away from static perimeters and toward decisions centered on users, assets, and resources. AI gateways play a similar role for model access: they become the policy decision and enforcement point for who may use which model path under which conditions.

The Capabilities That Matter

Good gateways govern model usage before, during, and after invocation.

Access Control

Model usage has to resolve back to identity.

That means the gateway should distinguish among human users, services, applications, agents, and delegated execution contexts. It should not only authenticate a token. It should know who or what is calling, on whose behalf, and under which approved role or use case.
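
To make the distinction concrete, here is a minimal Python sketch of a caller context that carries more than a token. The class names, the `APPROVED_USE_CASES` table, and the rule that agents must act on behalf of a named principal are all illustrative assumptions, not a prescribed schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CallerKind(Enum):
    HUMAN_USER = "human_user"
    SERVICE = "service"
    APPLICATION = "application"
    AGENT = "agent"


@dataclass(frozen=True)
class CallerContext:
    caller_id: str
    kind: CallerKind
    on_behalf_of: Optional[str]  # delegated principal, if any
    role: str
    use_case: str


# Hypothetical registry of approved (role, use_case) pairs per caller kind.
APPROVED_USE_CASES = {
    CallerKind.AGENT: {("customer_service", "draft_reply")},
    CallerKind.HUMAN_USER: {("analyst", "research_summary")},
}


def is_authorized(ctx: CallerContext) -> bool:
    """Return True only if the caller kind, role, and use case are approved."""
    # Assumed rule: an agent must always act on behalf of a named principal.
    if ctx.kind is CallerKind.AGENT and ctx.on_behalf_of is None:
        return False
    return (ctx.role, ctx.use_case) in APPROVED_USE_CASES.get(ctx.kind, set())
```

A valid token alone would not pass this check: an agent with the right role but no delegating principal is still refused.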

Policy Enforcement

The gateway is where input and output constraints become executable.

That may include rules about which data classes may be sent to external models, which prompts require redaction, which outputs are allowed to trigger downstream action, when human review is mandatory, and when a request must be blocked entirely.
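
A rough sketch of what "executable" can mean here, in Python. The data-class labels, the `"external"` destination flag, and the account-number pattern are assumptions chosen for illustration; a real deployment would draw them from the institution's own classification scheme.

```python
import re
from dataclasses import dataclass, field
from typing import List, Set

# Assumed pattern: any 10 to 16 digit run is treated as an account number.
ACCOUNT_NUMBER = re.compile(r"\b\d{10,16}\b")


@dataclass
class PolicyResult:
    action: str                      # "allow", "redact", or "block"
    prompt: str                      # prompt as it may be forwarded
    requires_human_review: bool = False
    reasons: List[str] = field(default_factory=list)


def enforce_policy(prompt: str, data_classes: Set[str],
                   destination: str, action_enabling: bool) -> PolicyResult:
    """Apply block, redact, and review rules before a prompt is forwarded."""
    # Restricted data never goes to an external model.
    if destination == "external" and "restricted" in data_classes:
        return PolicyResult("block", "",
                            reasons=["restricted data may not be sent externally"])

    action, reasons = "allow", []
    # Redact account numbers on the way to external models.
    if destination == "external" and ACCOUNT_NUMBER.search(prompt):
        prompt = ACCOUNT_NUMBER.sub("[REDACTED]", prompt)
        action = "redact"
        reasons.append("account number redacted")

    # Outputs that can trigger downstream action require human review.
    return PolicyResult(action, prompt,
                        requires_human_review=action_enabling, reasons=reasons)
```

For example, `enforce_policy("Balance for 1234567890123?", {"confidential"}, "external", False)` forwards the prompt with the number replaced by `[REDACTED]`.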

Model Routing

Different requests should not automatically go to the same model.

The gateway can route based on task type, latency tolerance, cost limits, jurisdiction, model approval status, or risk level. That allows institutions to separate experimentation from production, recommendation-only use from action-enabling use, and low-risk summarization from higher-risk decision support.
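
One way to picture this is a route table filtered by eligibility and then ranked by cost. The sketch below is a simplified Python illustration; the route names, risk tiers, jurisdictions, and prices are invented for the example.

```python
from dataclasses import dataclass
from typing import FrozenSet, Optional


@dataclass(frozen=True)
class ModelRoute:
    name: str
    approved_for_production: bool
    max_risk: int                   # highest risk tier served (1 = low, 3 = high)
    jurisdictions: FrozenSet[str]
    cost_per_1k_tokens: float


# Hypothetical route table.
ROUTES = [
    ModelRoute("internal-small", True, 3, frozenset({"US", "EU"}), 0.40),
    ModelRoute("external-general", True, 1, frozenset({"US"}), 0.10),
    ModelRoute("external-experimental", False, 1, frozenset({"US", "EU"}), 0.05),
]


def select_route(risk: int, jurisdiction: str, production: bool,
                 max_cost: float) -> Optional[ModelRoute]:
    """Return the cheapest eligible route, or None if nothing qualifies."""
    eligible = [
        r for r in ROUTES
        if r.max_risk >= risk
        and jurisdiction in r.jurisdictions
        and (r.approved_for_production or not production)
        and r.cost_per_1k_tokens <= max_cost
    ]
    return min(eligible, key=lambda r: r.cost_per_1k_tokens, default=None)
```

Returning `None` rather than a default model is deliberate in this sketch: when no approved path exists, the request should fail closed instead of falling back to whatever endpoint is available.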

Cost And Rate Control

Uncontrolled access creates both financial waste and operational exposure.

The gateway should be able to enforce quotas, budget limits, concurrency constraints, and throttling policies across users, teams, applications, and agents. In finance, that is not only a platform concern. It is part of preventing misuse and ensuring controlled deployment.
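
As an illustration, budget and concurrency limits can be checked in a small ledger before a call is dispatched. This Python sketch is single-threaded and in-memory, and the `QuotaLedger` name and its keys are hypothetical; a production gateway would need shared, durable state and locking.

```python
from dataclasses import dataclass
from typing import Dict


class QuotaExceeded(Exception):
    """Raised when a call would breach a budget or concurrency limit."""


@dataclass
class Quota:
    budget_usd: float
    max_concurrent: int
    spent_usd: float = 0.0
    in_flight: int = 0


class QuotaLedger:
    def __init__(self) -> None:
        self._quotas: Dict[str, Quota] = {}

    def register(self, key: str, budget_usd: float, max_concurrent: int) -> None:
        self._quotas[key] = Quota(budget_usd, max_concurrent)

    def acquire(self, key: str, estimated_cost_usd: float) -> None:
        """Reserve capacity for one call, or raise QuotaExceeded."""
        q = self._quotas[key]
        if q.in_flight >= q.max_concurrent:
            raise QuotaExceeded(f"{key}: concurrency limit reached")
        if q.spent_usd + estimated_cost_usd > q.budget_usd:
            raise QuotaExceeded(f"{key}: budget exhausted")
        q.in_flight += 1
        q.spent_usd += estimated_cost_usd

    def release(self, key: str) -> None:
        """Mark one in-flight call as finished."""
        q = self._quotas[key]
        q.in_flight = max(0, q.in_flight - 1)
```

The key can be a user, team, application, or agent identifier, so the same mechanism covers each of those scopes.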

Audit Logging That Is Lineage-Aware

A raw request log is not enough.

Every model call should become part of the execution evidence for the broader decision path. The gateway can emit structured events that connect caller identity, model selection, request context, policy evaluation, output handling, and downstream action references into the lineage graph.

At that point, the call is not just recorded.

It is governed as part of a traceable execution chain.

The OpenLineage standard is designed to record metadata for jobs in execution using consistent entities such as datasets, jobs, and runs.

The same design principle matters for gateway events: runtime evidence has to be emitted in a structured form if model invocation is going to become part of a governable lineage graph.
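
A structured gateway event might look something like the following Python sketch. It borrows the run and job vocabulary for readability, but it is not a conformant OpenLineage event; every field name here is an assumption made for illustration.

```python
import json
import uuid
from datetime import datetime, timezone
from typing import Optional


def build_gateway_event(caller_id: str, on_behalf_of: Optional[str],
                        model: str, policy_decision: str,
                        parent_run_id: str,
                        downstream_ref: Optional[str] = None) -> dict:
    """Build one JSON-serializable evidence record for a model call."""
    return {
        "event_time": datetime.now(timezone.utc).isoformat(),
        # Each model call gets its own run id, linked to the workflow run
        # that triggered it so the call can be joined into the lineage graph.
        "run_id": str(uuid.uuid4()),
        "parent_run_id": parent_run_id,
        "job": {"namespace": "ai-gateway", "name": f"invoke:{model}"},
        "caller": {"id": caller_id, "on_behalf_of": on_behalf_of},
        "policy": {"decision": policy_decision},
        "downstream_action_ref": downstream_ref,
    }


event = build_gateway_event(
    caller_id="agent-7",
    on_behalf_of="rep-42",
    model="internal-small",
    policy_decision="redact",
    parent_run_id="workflow-run-001",
)
print(json.dumps(event, indent=2))
```

The important property is the `parent_run_id` link: without it the record is only a request log, and with it the call becomes a node in a larger execution chain.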

How The Gateway Closes The Loop

This is where the earlier layers become actionable control conditions.

The gateway is not independent of the rest of the architecture.

It is where the earlier layers are translated into an allow, deny, route, redact, escalate, or constrain decision.

Identity tells the gateway who is calling the model.

Lineage tells the gateway how the request and resulting output will be traced.

Semantics tells the gateway whether the inputs, outputs, and task framing are well-defined enough to use safely.

The gateway decides whether the call is allowed to happen at all.

This way, the gateway translates identity into access decisions.

It translates policy into enforced constraints.

It translates lineage into auditable execution.
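
The translation can be sketched as a single function that takes signals from the earlier layers and returns one decision. The Python below is a deliberately simplified illustration: the signal names and the precedence order are assumptions, and routing is left out because it would normally be resolved in a separate step.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    ROUTE = "route"          # listed for completeness; not returned below
    REDACT = "redact"
    ESCALATE = "escalate"
    CONSTRAIN = "constrain"


@dataclass(frozen=True)
class LayerSignals:
    caller_resolved: bool          # identity: do we know who is calling?
    caller_authorized: bool        # identity plus policy: approved use case?
    trace_available: bool          # lineage: can the call be linked to a run?
    terms_defined: bool            # semantics: are inputs and task well-defined?
    contains_sensitive_data: bool
    action_enabling: bool          # can the output trigger downstream action?


def decide(s: LayerSignals) -> Decision:
    """Map layer signals to one decision, most restrictive check first."""
    if not (s.caller_resolved and s.caller_authorized):
        return Decision.DENY
    if not s.trace_available:
        return Decision.DENY       # assumed rule: untraceable calls are refused
    if not s.terms_defined:
        return Decision.ESCALATE   # ambiguous meaning goes to a human
    if s.contains_sensitive_data:
        return Decision.REDACT
    if s.action_enabling:
        return Decision.CONSTRAIN  # e.g. recommend only, no direct action
    return Decision.ALLOW
```

A real gateway would typically return several obligations at once (redact and constrain, for instance); returning a single value keeps the precedence easy to read.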

Where Enforcement Actually Starts

Governance only becomes real when the system can stop an unapproved call.

Once a gateway sits in the execution path, it can support real control enforcement:

- block unapproved applications or agents from calling production models

- prevent prompts containing restricted data classes from being sent to external providers

- route higher-risk tasks to approved internal models or to human-review workflows instead of general-purpose endpoints

- cap spend and concurrency for experimental or non-production use cases

- restrict outputs so a model may summarize or recommend but not trigger direct action without further approval

This is the practical difference between governance as architecture and governance as runtime control.

Without the gateway, institutions mostly observe model usage after the fact.

With the gateway, they can constrain it before the call completes.

What Good Looks Like

The test is whether the institution can control model access centrally without losing operational flexibility.

A Customer-Service Agent Example

Consider a financial institution deploying customer-service agents across retail banking channels.

In a weak architecture, each channel team wires model access directly into its own application. One team uses an external frontier model for conversation handling. Another uses a smaller provider model for summarization. A third embeds direct model calls in an internal servicing desktop. Prompt restrictions differ by team. Output handling differs by team. Some flows let the model draft responses only. Others allow automated next-step recommendations without consistent approval logic. The institution may have observability dashboards, but it does not have centralized control.

Identity may tell the institution which service agent called the model.

Lineage may capture how the response entered the workflow.

Semantics may define what account, dispute, escalation, or resolution terms mean.

That still does not mean the institution has governed model access.

In a stronger architecture, all model calls pass through a gateway. The gateway resolves whether the caller is a human service representative tool, a customer-facing agent, or an internal automation service. It evaluates whether customer data in the prompt is permitted for the destination model, whether the task type is allowed for that model class, whether the requested action is recommendation-only or action-enabling, and whether the output requires human review before release. It then routes the request to an approved model path, applies the required constraints, emits lineage-aware evidence, and blocks anything outside policy.
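
The flow in this example can be sketched end to end in a few lines of Python. The caller kinds, task names, model names, and the rule that customer-facing output always needs human review are assumptions made for the illustration, not a description of any particular institution's policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    caller_kind: str         # "rep_tool", "customer_agent", or "automation"
    task: str                # e.g. "draft_reply", "next_step", "summarize"
    has_customer_data: bool
    action_enabling: bool


# Hypothetical policy table: which tasks each caller kind may request.
ALLOWED_TASKS = {
    "rep_tool": {"draft_reply", "next_step"},
    "customer_agent": {"draft_reply"},
    "automation": {"summarize"},
}


def handle(req: Request) -> dict:
    """Resolve, evaluate, route, and record one customer-service request."""
    evidence = [f"caller resolved as {req.caller_kind}"]

    # Block anything outside policy before a model is chosen.
    if req.task not in ALLOWED_TASKS.get(req.caller_kind, set()):
        evidence.append(f"task {req.task} not approved for caller")
        return {"status": "blocked", "model": None,
                "human_review": False, "evidence": evidence}

    # Customer data stays on an approved internal model path.
    model = "internal-approved" if req.has_customer_data else "external-general"
    evidence.append(f"routed to {model}")

    # Assumed rule: action-enabling or customer-facing output needs review.
    review = req.action_enabling or req.caller_kind == "customer_agent"
    evidence.append("human review required" if review else "no review required")

    return {"status": "allowed", "model": model,
            "human_review": review, "evidence": evidence}
```

Every branch appends to `evidence`, so a blocked request leaves the same kind of trace as an allowed one.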

At that point, the institution is not just consuming models.

It is governing model access.

The Real Standard

You cannot govern what you cannot control.

AI gateways matter because they change governance from interpretation into execution.

They are not optional infrastructure for routing and cost optimization. They are the mechanism by which institutions apply governance in real time.

That is the standard that matters in financial services.

Runtime control at the gateway supports model risk management, operational control, cost discipline, vendor governance, incident response, and regulator engagement.

An organization that understands its identities, traces its lineage, and standardizes its semantics still does not have governed AI if model access remains unconstrained.

It has a well-described environment with a weak control plane.

AI gateways are where governance becomes execution.

What Comes Next

Access control is necessary, but it does not tell you whether autonomous behavior is acceptable.

Once institutions can control how models are accessed, the next question changes.

It is no longer only whether the system was allowed to act.

It is whether the agent or model-driven workflow is performing acceptably over time.

That is where evaluation systems come in.

Identity establishes who is acting.

Lineage establishes what can be trusted.

Semantics establishes what things mean.

Gateways establish what is allowed to happen.

Evaluation determines whether autonomous behavior remains within acceptable bounds.

The next article will move from access control to performance governance: how institutions evaluate agents continuously, define acceptable behavior, and trigger oversight when autonomous systems stop meeting the standard required for regulated operations.

Series: The Architecture of Governed AI Systems

- Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs Human Metadata

- Identity for AI Systems: The Missing Layer of AI Governance

- Data Lineage as the Trust Backbone of AI Systems

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI

- AI Gateways: The Control Plane for Model Access

- How to Evaluate AI Agents: Building a Governance Framework

- AI Policy Engines: How to Operationalize AI Governance for Financial Institutions

- Institutional Traceability: The Operating System of AI Governance (coming next)

Follow the Series

We are continuing to explore the architecture required for governed AI systems. Upcoming articles will cover identity infrastructure, policy engines, and AI control planes.

Subscribe to the Data Sense newsletter to receive updates when the next article is published.