AI policy engines are where AI governance stops being guidance and starts becoming repeatable decision logic.

A financial institution can have clear identity, traceable lineage, stable semantics, controlled model access, and even strong evaluation systems, and still fail at the next point that matters: turning governance requirements into consistent operational decisions.

If thresholds, exceptions, approvals, restrictions, and override rules live in PDFs, committee notes, application code, and team-specific playbooks, the architecture may be governed in principle. It is not yet executable in practice.

That is why policy engines matter.

They are often described as authorization infrastructure or policy-as-code tooling. But is that framing enough for good governance?

In regulated financial environments, policy engines are the layer that translates governance logic into executable decisions: what an agent, model, user, or workflow is allowed to do next, under which conditions, with which approvals, and with what evidence.

By this point in the series, the institution can already measure autonomous behavior.

Identity establishes what exists and who is acting. Lineage establishes what can be trusted. Semantics establishes what things mean. Gateways establish what model access is allowed. Evaluation establishes whether behavior remains acceptable.

That makes the environment observable, controllable, and accountable.

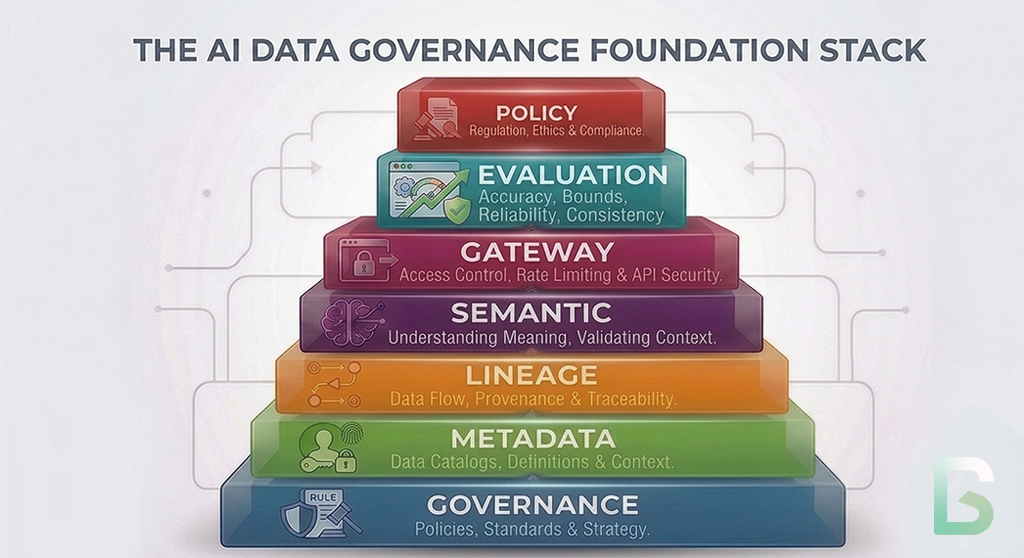

This is the next layer in the governance stack. Governance establishes authority. Metadata makes systems machine-operable. Identity resolves actors and artifacts. Lineage connects execution to evidence. Semantics stabilizes meaning. Gateways control model access. Evaluation measures whether behavior remains acceptable. Policy engines determine how governance decisions are executed consistently at scale.

That is the shift from oversight to decision automation.

Without policy engines, the earlier layers still depend on local interpretation.

With policy engines, governance logic becomes executable.

Previously in the Series

This article builds directly on the earlier layers of the AI governance stack:

- Why Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs. Human Metadata

- Identity for AI Systems: The Glue That Holds AI Governance Together

- Data Lineage as the Backbone of AI Governance

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI

- AI Gateways: The Control Plane for Model Access

- Agent Evaluation Systems: The Governance Layer for Autonomous AI

Why Evaluation Is Not Enough

Knowing that a system is weak does not decide what happens next.

Evaluation tells an institution whether autonomous behavior remains acceptable.

It does not, by itself, determine how the institution should respond.

If an agent’s quality score falls below threshold, does autonomy get reduced? If a model call involves a restricted data class, is the request blocked, routed internally, or sent to human review? If one jurisdiction allows a workflow and another does not, where is that logic expressed and enforced?

Those are policy execution questions.

They sit downstream of measurement.

That is why policy engines follow evaluation in the governance stack.

Evaluation produces risk signals and evidence.

Policy engines determine what the system is allowed to do with them.

Why Human-Only Governance Breaks At Scale

Manual governance does not scale across agentic systems.

Most institutions start with some mixture of:

- approval logic embedded directly in application code

- workflow restrictions documented in policy manuals rather than executable rules

- human reviewers making case-by-case decisions without a central decision layer

- duplicated rules across channels, products, and teams

- ad hoc override paths with uneven documentation

That creates four governance failures.

Inconsistent Decision Logic

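One team blocks action-enabling outputs below a confidence threshold. Another allows them with informal review. A third encodes a similar rule differently inside a workflow tool. The same request can then receive three different outcomes depending on where it enters the institution.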

Slow Response To Policy Change

When risk thresholds, regulatory requirements, or internal standards change, updates have to be coordinated across multiple systems and teams. That creates lag between policy intent and runtime behavior.

Weak Auditability Of Why A Decision Was Made

The institution may know what happened. It may not be able to show which rule, exception, threshold, or approval path caused the system to permit, deny, escalate, or constrain the action.

Brittle Governance Embedded In Application Logic

When governance logic is buried inside applications, prompts, orchestration scripts, and agent harnesses, testing and change control become harder. A business-rule change becomes a software release problem.

That is not a policy-documentation problem.

It is a decision-automation problem.

What An AI Policy Engine Actually Does

A policy engine is not just an authorization check. It is the execution layer for governance logic.

The common framing is too small.

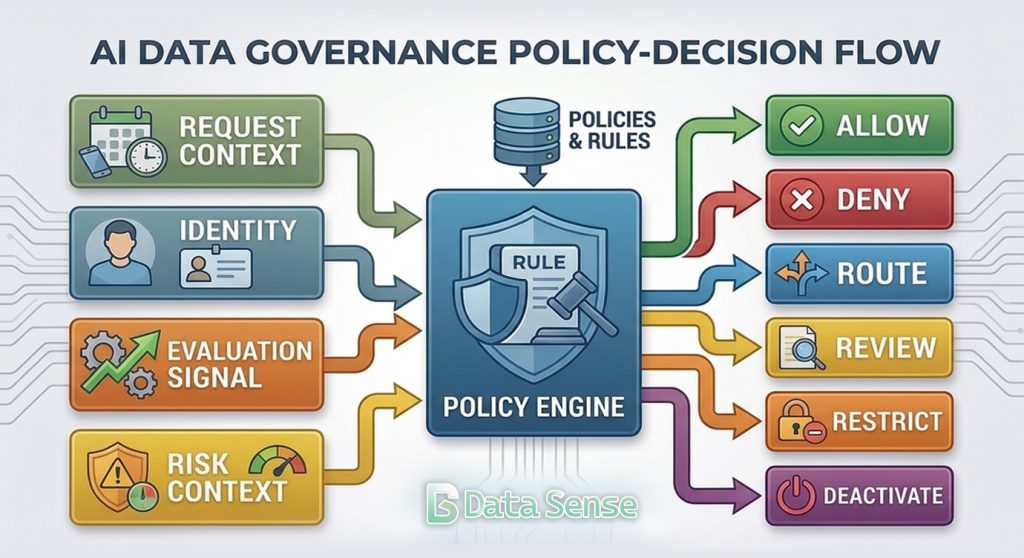

An AI policy engine does not merely answer a narrow access-control question. In a governed architecture, it evaluates structured inputs about identity, action type, resource, context, risk, and approval state to determine what the system is permitted to do next.

Its role is to convert governance logic into repeatable outcomes such as allow, deny, route, downgrade, require approval, require review, or deactivate.

That makes the policy engine governance-critical infrastructure.

The NIST AI Risk Management Framework distinguishes between measuring risk and managing it. Measurement outcomes are meant to inform response decisions. Policy engines are one of the practical mechanisms by which that transition happens in production.

Further, the NIST Zero Trust Architecture guidance (SP 800-207) separates the policy decision point from the policy enforcement point. AI policy engines serve a similar architectural role: they externalize decision logic so governance is not left to scattered application code.

Implementation guidance from Open Policy Agent and Cedar points in the same direction. Both emphasize separating policy decision logic from application code. That architectural principle matters for AI governance because policy should be centrally testable, versioned, reviewable, and updateable without rewriting every workflow that depends on it.
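The separation of decision from enforcement can be sketched in a few lines. This is a minimal illustration in plain Python, not the API of Open Policy Agent or Cedar; the function names `decide` and `enforce`, the action name, and the single rule are all assumptions made for the example.

```python
from typing import Callable


def decide(action: str, data_class: str) -> str:
    """Decision point: a pure function that could be owned and versioned centrally.

    Hypothetical rule: restricted data may not be sent to an external model.
    """
    if action == "call_external_model" and data_class == "restricted":
        return "deny"
    return "allow"


def enforce(action: str, data_class: str, perform: Callable[[], str]) -> str:
    """Enforcement point: lives in the application and only applies the decision."""
    decision = decide(action, data_class)
    if decision != "allow":
        raise PermissionError(f"{action} blocked by policy ({decision})")
    return perform()
```

The application never contains the rule itself. Changing the rule means changing `decide`, not every workflow that calls `enforce`.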

The Capabilities That Matter

Good policy engines turn governance requirements into structured, testable, and explainable decisions.

Structured Inputs For Decisioning

The engine needs machine-readable inputs.

That includes who is acting, what action is being attempted, what resource or model path is involved, what risk or evaluation signals apply, what jurisdiction or business context applies, and what approvals or exceptions already exist.
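One way to picture those inputs is as a single typed request object. The sketch below is an assumption about shape only: every field name and example value is hypothetical, and a real institution would align them with its own identity, semantic, and evaluation layers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRequest:
    """Machine-readable input to a policy decision (all field names are illustrative)."""
    actor_id: str                              # who or what is acting
    action: str                                # what is being attempted
    resource: str                              # resource or model path involved
    jurisdiction: str                          # regulatory or business context
    data_classes: frozenset = frozenset()      # data classes present in the request
    evaluation_score: Optional[float] = None   # latest signal from the evaluation layer
    approvals: frozenset = frozenset()         # approvals or exceptions already granted


def missing_fields(req: DecisionRequest) -> list:
    """Return the names of required fields that are empty, so the caller can fail closed."""
    required = ("actor_id", "action", "resource", "jurisdiction")
    return [name for name in required if not getattr(req, name)]


request = DecisionRequest(
    actor_id="agent:hardship-assistant",
    action="recommend_hardship_option",
    resource="model:internal-llm",
    jurisdiction="UK",
    data_classes=frozenset({"customer_financial"}),
    evaluation_score=0.91,
)
```

Validating the request before evaluating any rule keeps an incomplete input from silently matching a permissive path.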

Decisions Beyond Allow Or Deny

In AI systems, a useful decision is often more than a binary answer.

The engine may need to return structured outcomes such as recommendation-only, human-review-required, internal-model-only, external-provider-blocked, or escalate-to-second-line.
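A minimal sketch of non-binary outcomes follows. It assumes, for simplicity, that outcomes can be ranked from least to most restrictive and that conflicts between rules resolve to the most restrictive result. That ranking is an illustrative assumption; a real engine might instead return a set of independent constraints.

```python
from enum import IntEnum


class Outcome(IntEnum):
    """Illustrative outcomes, ordered from least to most restrictive (ordering is assumed)."""
    ALLOW = 0
    RECOMMENDATION_ONLY = 1
    INTERNAL_MODEL_ONLY = 2
    HUMAN_REVIEW_REQUIRED = 3
    ESCALATE_TO_SECOND_LINE = 4
    DENY = 5


def most_restrictive(outcomes):
    """Combine the outcomes of several rules by keeping the most restrictive one."""
    # With no applicable rule, this sketch fails closed and returns DENY.
    return max(outcomes, default=Outcome.DENY)
```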

Versioned Policy-As-Code

Governance logic should be maintained as a governed artifact, not as hidden conditional logic spread across applications.

That makes policy reviewable, testable, diffable, and change-controlled.
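As a rough illustration, policy can be held as structured data with an explicit version and a content fingerprint, so every decision can cite the exact policy text that produced it. The policy identifier, rule shape, and helper name `policy_fingerprint` are hypothetical.

```python
import hashlib
import json

# Hypothetical policy document; in practice this would live in version control.
POLICY = {
    "policy_id": "external-provider-restrictions",
    "version": "1.2.0",
    "rules": [
        {"id": "R1", "if_data_class": "restricted", "then": "external_provider_blocked"},
    ],
}


def policy_fingerprint(policy: dict) -> str:
    """Stable SHA-256 hash of the policy content, independent of key order."""
    canonical = json.dumps(policy, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Because the fingerprint changes whenever the content changes, a reviewer can confirm that the policy running in production is the one that was approved.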

Exception And Override Handling

Institutions need a formal way to handle approved exceptions.

The policy engine should be able to incorporate temporary overrides, delegated approvals, expiry conditions, and explicit exception records without destroying the consistency of the baseline rules.
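A sketch of how an explicit exception record with an expiry condition might look is shown below. The record fields, identifiers, and dates are assumptions chosen for illustration. The point is that an override is a dated, attributable record checked at decision time, not a change to the baseline rule.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ExceptionRecord:
    """An approved, time-boxed exception to one rule for one actor (fields are illustrative)."""
    exception_id: str
    rule_id: str
    actor_id: str
    approved_by: str
    expires_at: datetime


def active_exception(records, rule_id, actor_id, now):
    """Return the first unexpired exception matching this rule and actor, else None."""
    for rec in records:
        if rec.rule_id == rule_id and rec.actor_id == actor_id and now < rec.expires_at:
            return rec
    return None


now = datetime(2025, 1, 1, tzinfo=timezone.utc)
records = [
    ExceptionRecord("EX-1", "R1", "agent:a", "second-line", now - timedelta(days=1)),  # expired
    ExceptionRecord("EX-2", "R1", "agent:a", "second-line", now + timedelta(days=7)),  # active
]
```

Once the expiry passes, the lookup returns nothing and the baseline rule applies again without anyone having to remember to remove the override.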

Evaluation-Aware Decisions

Policy should be able to consume the outputs of the evaluation layer.

If performance drops below threshold, if the workflow leaves its approved scope, or if a new risk signal appears, the policy engine should be able to constrain behavior automatically.
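The three triggers above can be expressed as a small decision function. This is a sketch under stated assumptions: the autonomy level names and the precedence among the triggers are illustrative, and the threshold would come from the institution's own evaluation standards.

```python
def permitted_autonomy(score: float, threshold: float,
                       in_approved_scope: bool, new_risk_signal: bool) -> str:
    """Map evaluation signals to an autonomy level (levels and precedence are assumed)."""
    if not in_approved_scope:
        return "blocked"                    # workflow has left its approved scope
    if new_risk_signal:
        return "human_review_required"      # a new risk signal has appeared
    if score < threshold:
        return "recommendation_only"        # performance has dropped below threshold
    return "action_enabling"
```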

Explainability And Evidence

A good engine does not just return an outcome.

It also makes it possible to show which rule, threshold, context, and exception path led to that decision.
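One possible shape for that evidence is a decision record emitted alongside every outcome. The field names, rule identifier, and values below are hypothetical. The sketch only shows that rule, threshold, context, and exception path can travel with the decision itself.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    """Evidence attached to one policy decision (field names are illustrative)."""
    outcome: str
    rule_id: str
    policy_version: str
    threshold: Optional[float]
    observed_value: Optional[float]
    exception_id: Optional[str]
    context: dict


def explain(record: DecisionRecord) -> str:
    """Render a short human-readable explanation of why the decision was made."""
    parts = [f"{record.outcome} by rule {record.rule_id} (policy {record.policy_version})"]
    if record.threshold is not None:
        parts.append(f"observed {record.observed_value} against threshold {record.threshold}")
    if record.exception_id:
        parts.append(f"under exception {record.exception_id}")
    return "; ".join(parts)


record = DecisionRecord(
    outcome="recommendation_only",
    rule_id="EVAL-QUALITY-01",
    policy_version="1.2.0",
    threshold=0.85,
    observed_value=0.79,
    exception_id=None,
    context={"jurisdiction": "UK", "product": "retail_servicing"},
)
audit_line = json.dumps(asdict(record), sort_keys=True)  # one line per decision for the audit log
```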

Cross-System Consistency

The same institution should not make different governance decisions merely because the request came through a different interface, product team, or orchestration layer.

Policy engines matter because they centralize decision logic while allowing different systems to consume it consistently.

How The Policy Engine Closes The Loop

This is where governance requirements become executable decision paths.

The policy engine is not independent of the rest of the architecture.

Identity tells the engine who or what is acting.

Lineage tells the engine what evidence, dependencies, and downstream consequences can be traced.

Semantics tells the engine whether the terms, task type, and thresholds are defined consistently.

Gateways tell the engine what model access is technically possible.

Evaluation tells the engine whether behavior remains acceptable.

The policy engine determines what the system is allowed to do next.

That is the conceptual shift that matters.

Policy engines translate governance policy into runtime logic.

They translate thresholds into actions.

They translate exceptions into controlled decisions.

Where Governance Actually Becomes Repeatable

Governance is not repeatable until the same inputs produce the same decision path.

Once a policy engine sits in the operating path, it can support real decision automation:

- downgrade an agent from action-enabling to recommendation-only when evaluation scores deteriorate

- block the use of an external provider for prompts containing a restricted data class

- require second-line approval before a high-impact workflow may act on a model output

- apply different restrictions by product, geography, business line, or regulatory context

- deactivate or supersede a workflow when performance or outcomes move outside approved bounds
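Several of the decisions listed above can be sketched as an ordered rule table in which the first matching rule wins. The rule identifiers, request keys, the 0.85 threshold, and the default-allow fallback are all assumptions made for this illustration; many institutions would prefer a default-deny fallback.

```python
# Each rule is (rule_id, predicate over the request dict, outcome).
# Rules are evaluated in order and the first match wins.
RULES = [
    ("DEACTIVATE-OUT-OF-BOUNDS",
     lambda r: r.get("outside_approved_bounds", False),
     "deactivate"),
    ("BLOCK-EXTERNAL-RESTRICTED",
     lambda r: r.get("provider") == "external" and "restricted" in r.get("data_classes", ()),
     "deny"),
    ("SECOND-LINE-HIGH-IMPACT",
     lambda r: r.get("impact") == "high" and "second_line" not in r.get("approvals", ()),
     "require_approval"),
    ("DOWNGRADE-LOW-SCORE",
     lambda r: r.get("evaluation_score", 0.0) < 0.85,  # a missing score is treated as failing
     "recommendation_only"),
]


def evaluate(request: dict):
    """Return (outcome, rule_id) for the first rule whose predicate matches."""
    for rule_id, predicate, outcome in RULES:
        if predicate(request):
            return outcome, rule_id
    return "allow", "DEFAULT-ALLOW"
```

Because the rules are data and the evaluation order is fixed, the same request always follows the same decision path, and the returned rule identifier shows which path that was.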

This is the practical difference between governance that is written down and governance that is executable.

Without a policy engine, institutions rely on documents, meetings, and fragmented application logic to interpret what should happen.

With a policy engine, they can encode those decisions directly into the operating model.

What Good Looks Like

The test is whether the institution can update governance logic centrally and have runtime behavior change consistently across the estate.

A Retail Servicing Example

Consider a servicing environment where agents help representatives draft responses, recommend hardship options, and prepare account actions for review.

In a weak architecture, each application team hardcodes its own approval logic. One workflow permits recommendation-only use for certain cases. Another allows automated next-step suggestions based on a local threshold. A third requires supervisor approval, but only in one channel. If a risk standard changes, each team has to update its own code and retrain its own operators. The institution may have governance documents, but it does not have one decision layer.

Identity may tell the institution who initiated the request.

Lineage may show which data and model outputs were used.

Semantics may define what hardship, complaint, or override means.

Gateways may control model access.

Evaluation may show whether the workflow is performing acceptably.

In a stronger architecture, all runtime decisions about what the workflow may do next are resolved through a policy engine. The engine evaluates the caller, task type, product line, jurisdiction, evaluation status, approval state, and output actionability. It can then allow recommendation-only use, require supervisor approval, block direct action, route to a human-review queue, or disable the workflow entirely if conditions fall outside policy.
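The difference between the two architectures can be illustrated with a toy version of the servicing case. In this sketch two channels consult one shared policy, so a central threshold change alters both at once. The channel names, task types, policy fields, and thresholds are hypothetical.

```python
def decide(policy: dict, task_type: str, evaluation_score: float, supervisor_approved: bool) -> str:
    """Single decision layer shared by every servicing channel (outcomes are illustrative)."""
    if evaluation_score < policy["min_score"]:
        return "route_to_human_review"
    if task_type in policy["approval_required_tasks"] and not supervisor_approved:
        return "require_supervisor_approval"
    return "allow"


# Both channels delegate to the same decision function and hold no rules of their own.
def phone_channel(policy: dict, score: float) -> str:
    return decide(policy, "hardship_recommendation", score, supervisor_approved=False)


def chat_channel(policy: dict, score: float) -> str:
    return decide(policy, "hardship_recommendation", score, supervisor_approved=False)


policy_v1 = {"version": "v1", "min_score": 0.85, "approval_required_tasks": {"account_action"}}
policy_v2 = dict(policy_v1, version="v2", min_score=0.90)  # risk standard tightened centrally
```

With a workflow score of 0.88, both channels allow the recommendation under `policy_v1` and both route it to human review under `policy_v2`, without any change to channel code.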

The Real Standard

You cannot automate governance with documents alone.

AI policy engines matter because they turn governance from written requirements into repeatable operational decisions.

They are not an optional implementation detail. They are the mechanism by which institutions encode restrictions, thresholds, approvals, exception paths, and deactivation logic into runtime behavior.

That is the standard that matters in financial services.

It supports operational accountability, model risk management, change control, control testing, incident response, and regulator engagement.

An organization that measures autonomous behavior still does not have governed AI if the response to that measurement depends on local interpretation, manual routing, or duplicated application logic. It has oversight with weak execution.

AI policy engines are where governance becomes repeatable decisioning.

What Comes Next

Decision automation is necessary, but institutions still need a way to reconstruct the full operating history of what happened, why it happened, and under which controls.

Once institutions can execute governance decisions consistently, the next question changes.

It is no longer only whether the system was allowed to act.

It is how the institution reconstructs decisions, evidence, overrides, controls, and outcomes across the entire estate.

That is where institutional traceability comes in.

Identity establishes who is acting.

Lineage establishes what can be trusted.

Semantics establishes what things mean.

Gateways establish what is allowed to be accessed.

Evaluation establishes whether behavior remains acceptable.

Policy engines establish how governance decisions are executed.

Institutional traceability connects all of those decisions into a durable operating record.

The next article will move from decision automation to traceability architecture: how institutions build a full operating system of record for AI decisions, controls, and accountability.

Series: The Architecture of Governed AI Systems

- Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs Human Metadata

- Identity for AI Systems: The Missing Layer of AI Governance

- Data Lineage as the Trust Backbone of AI Systems

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI

- AI Gateways: The Control Plane for Model Access

- How to Evaluate AI Agents: Building a Governance Framework

- AI Policy Engines: How to Operationalize AI Governance for Financial Institutions

- Institutional Traceability: The Operating System of AI Governance (coming next)

Follow the Series

We are continuing to explore the architecture required for governed AI systems. Upcoming articles will cover institutional traceability and AI control planes.

Subscribe to the Data Sense newsletter to receive updates when the next article is published.