Most financial institutions say they have data lineage.

What they usually have is a reconstruction layer: metadata inferred from logs, scheduler state, warehouse queries, notebook history, catalog scans, and pipeline definitions. That is useful for debugging. It is not enough for governance.

That distinction matters more as AI moves deeper into regulated financial activity. When models and agents influence credit decisions, fraud controls, transaction monitoring, customer servicing, trading workflows, or risk operations, lineage stops being a reporting convenience. It becomes part of the control environment.

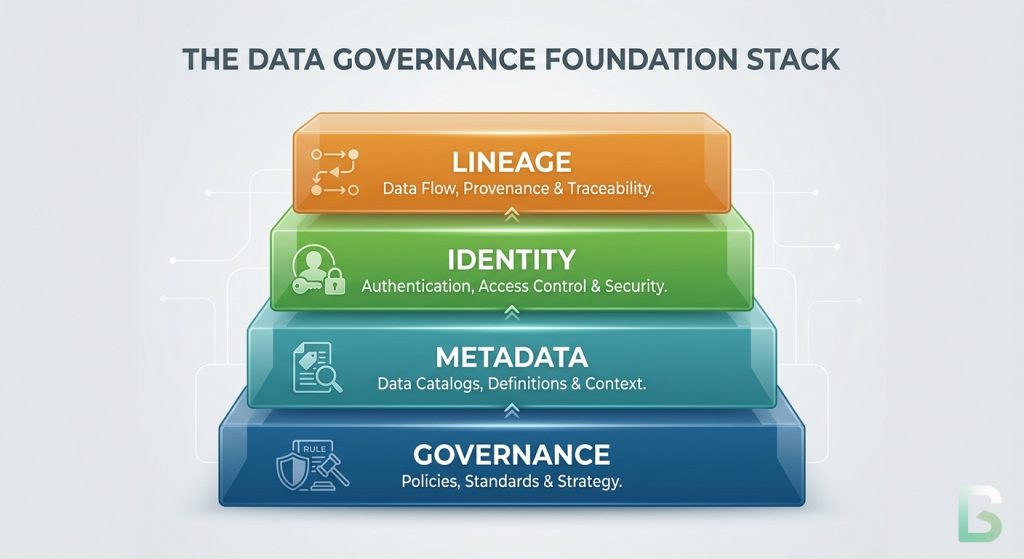

This is the next layer in the governance stack. Governance establishes the need for control. Metadata makes systems machine-operable. Identity makes data, models, and actors verifiable. Lineage connects those layers into a trust graph that can explain how a decision was produced and whether it should have been allowed to happen.

In financial services, that is the real test. The question is not whether a team can draw a dependency diagram after an incident. The question is whether the institution can produce a reliable chain of evidence showing what data, model, policy logic, and execution context produced a specific outcome.

If it cannot, then it does not have governed AI. It has opaque automation with better observability.

Previously in the Series

This article builds directly on the earlier layers of the AI governance stack:

- Why Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs. Human Metadata

- Identity for AI Systems: The Glue That Holds AI Governance Together

Why Reconstructed Lineage Falls Short

Reconstruction helps with debugging. It does not satisfy governance.

Traditional lineage programs were built for analytics estates, not for governed decision systems.

Incomplete Logging Surfaces

Traditional lineage programs assume the logging surface is complete. It is not. Real financial platforms span warehouses, streaming systems, feature pipelines, model registries, model serving layers, APIs, workflow engines, case management tools, and external data providers, and no single logging surface covers them all.

Non-Deterministic Execution

They assume the system is deterministic enough to replay. It often is not. Feature pipelines run asynchronously. Data is corrected late. Models are retrained. Thresholds change. External inputs drift. By the time someone investigates, the exact state that existed at decision time may already be gone.

Correlation Is Not Evidence

They assume correlation is enough. It is not. A log showing that a model ran near the time a customer was declined does not prove which feature state, model version, decision policy, or override path actually produced that result.
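A minimal sketch of that gap, using made-up records: during a rollout, two model versions run within seconds of a decline, so matching on timestamps returns more than one candidate. Only a reference written into the decision event at decision time resolves it. The field names (`model_version`, `ran_at`, `at`) and the five-second window are illustrative assumptions, not taken from any particular logging system.

```python
from datetime import datetime, timedelta

# Hypothetical serving logs: two model versions ran during a rollout.
model_runs = [
    {"model_version": "v3", "ran_at": datetime(2024, 3, 1, 10, 0, 2)},
    {"model_version": "v4", "ran_at": datetime(2024, 3, 1, 10, 0, 3)},
]

# A decline event that carries no reference to the model that scored it.
decline = {"customer": "c-77", "at": datetime(2024, 3, 1, 10, 0, 4)}

# The same event when the serving layer records the reference at decision time.
decline_with_ref = {**decline, "model_version": "v4"}


def candidates_by_time(event, runs, window=timedelta(seconds=5)):
    """Reconstruction: every model run that finished shortly before the event."""
    return [
        r["model_version"]
        for r in runs
        if timedelta(0) <= event["at"] - r["ran_at"] <= window
    ]


def resolve_model_version(event, runs):
    """Prefer the recorded reference; fall back to time-based inference."""
    if event.get("model_version"):
        return [event["model_version"]]
    return candidates_by_time(event, runs)


print(resolve_model_version(decline, model_runs))           # ambiguous
print(resolve_model_version(decline_with_ref, model_runs))  # unambiguous
```

The first call returns two plausible versions, which is useful for debugging but not something an institution can present as evidence.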

That is the boundary between observability and governance.

The W3C PROV overview is useful here because it frames provenance around entities, activities, and agents involved in producing an outcome so that reliability and trustworthiness can be assessed. That is a higher standard than inferring that systems were probably connected.

Why Finance Needs More Than Technical Traceability

In regulated environments, lineage has to explain decisions, not just data flows.

In finance, lineage is not only about tracing data. It is about tracing decisions.

That matters because the outputs are often consequential. A model score may influence whether a payment is flagged, a transaction is blocked, a customer is escalated, a trade is reviewed, or an account is subject to enhanced monitoring. Even where AI is not making the final decision, it may still shape the path a human follows.

That creates three governance requirements.

- Firms need to know where the underlying data came from, how it was transformed, and whether it met quality expectations.

- They need to know which model, prompt, rule set, or policy logic was active at the moment of decision.

- They need to show that the output entered downstream processes through an approved path rather than through undocumented transformation or manual workaround.

These are not separate disciplines. They are different views of the same control problem.

From Lineage to Provenance

Identity turns a dependency map into accountable evidence.

Identity Comes First

The shift starts with identity.

Once a firm can assign durable identifiers to the things that matter, it can stop treating lineage as a guessed dependency map and start treating it as provenance.

A governed AI environment needs stable identities for four classes of objects:

- data entities

- transformation activities

- responsible agents

- resulting artifacts

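In W3C PROV-DM, these map to three core types: data entities and resulting artifacts are both Entities, transformation activities are Activities, and responsible agents are Agents. They are linked by relations such as used, wasGeneratedBy, wasDerivedFrom, wasAssociatedWith, and wasAttributedTo. That is much closer to what a financial control framework needs than a simple view of job A feeding table B.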
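To make the mapping concrete, here is a small sketch that models the three PROV core types and a handful of relations as plain Python dataclasses. The classes and identifier formats are simplified, hypothetical stand-ins for illustration; they are not an implementation of the PROV specification or of any PROV library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    id: str  # e.g. a dataset version or a derived feature set


@dataclass(frozen=True)
class Activity:
    id: str  # e.g. one run of a feature pipeline


@dataclass(frozen=True)
class Agent:
    id: str  # e.g. a service account or an accountable function


@dataclass(frozen=True)
class Relation:
    kind: str     # PROV relation name, e.g. "used"
    subject: str  # id of the thing the claim is about
    target: str   # id of the thing it points to


raw = Entity("dataset:customer_raw@v42")
features = Entity("features:affordability@run-0017")
run = Activity("run:feature-pipeline/0017")
svc = Agent("agent:feature-service")

claims = [
    Relation("used", run.id, raw.id),
    Relation("wasGeneratedBy", features.id, run.id),
    Relation("wasDerivedFrom", features.id, raw.id),
    Relation("wasAssociatedWith", run.id, svc.id),
    Relation("wasAttributedTo", features.id, svc.id),
]


def attributed_to(entity_id, claims):
    """Return the agents an entity is attributed to."""
    return [
        c.target
        for c in claims
        if c.kind == "wasAttributedTo" and c.subject == entity_id
    ]


print(attributed_to(features.id, claims))
```

Even at this size, the claims answer a question that "job A feeds table B" cannot: who is accountable for the feature set.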

What Provenance Has to Capture

Operationally, every material transformation should answer four questions:

- Which exact inputs were used?

- Which activity transformed them?

- Which outputs were generated?

- Which agent, service, or accountable function was responsible?

Those answers need versioned identifiers, timestamps, and execution context. That is why the OpenLineage object model matters in practice. It distinguishes between a job (the process definition), a run (one execution of that job), and a dataset, and it separates runtime evidence from design-time metadata.
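As a rough illustration, the sketch below builds a run event as a plain dictionary at the moment a job finishes. The field names follow the general shape of an OpenLineage run event as I understand it (event type, event time, run, job, inputs, outputs, producer), but the sketch omits fields a real event needs and should be checked against the OpenLineage specification. The namespace, job name, dataset names, and producer URL are invented for the example.

```python
import json
import uuid
from datetime import datetime, timezone


def build_run_event(job_name, inputs, outputs, event_type="COMPLETE"):
    """Assemble a runtime lineage claim for one execution of a job.

    inputs and outputs are lists of (namespace, name) pairs, where the
    name carries an explicit version so the claim is unambiguous.
    """
    return {
        "eventType": event_type,
        "eventTime": datetime.now(timezone.utc).isoformat(),
        "run": {"runId": str(uuid.uuid4())},                     # this execution
        "job": {"namespace": "credit-risk", "name": job_name},   # the definition
        "inputs": [{"namespace": ns, "name": n} for ns, n in inputs],
        "outputs": [{"namespace": ns, "name": n} for ns, n in outputs],
        "producer": "https://example.com/feature-pipeline",      # hypothetical
    }


event = build_run_event(
    job_name="compute_affordability_features",
    inputs=[("warehouse", "customer_norm@v42")],
    outputs=[("feature-store", "affordability@run-0017")],
)
print(json.dumps(event, indent=2))
```

The point of the design is that the pipeline itself emits the claim while it runs, rather than a scanner inferring it afterwards from logs.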

Without identity, lineage is reconstruction.

With identity and runtime-emitted claims, lineage becomes provenance.

The Four Layers That Matter

Data, features, models, and decisions have to resolve into one connected graph.

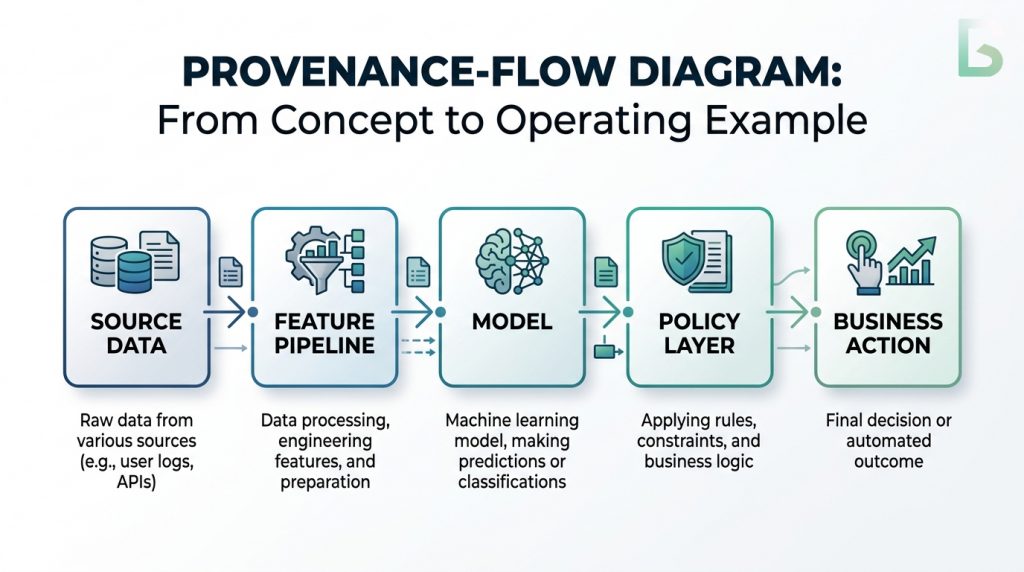

For financial-sector AI, lineage is not one generic graph. It has four connected layers.

Data Lineage

Data lineage records how raw and intermediate datasets move through ingestion, normalization, enrichment, and storage.

Feature Lineage

Feature lineage records how source data becomes model-ready input, including point-in-time joins, aggregation windows, freshness constraints, and quality assertions.
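A point-in-time join is the part of feature lineage that is easiest to get wrong, so a small sketch may help. It looks up the value that was in effect at decision time rather than the latest value, and returns the effective date alongside it so the lineage record can reference the exact observation. The balance history and the `value_as_of` helper are hypothetical.

```python
from bisect import bisect_right

# (effective_date, value) pairs sorted by date. ISO dates sort correctly as strings.
balance_history = [
    ("2024-01-05", 1200.0),
    ("2024-02-03", 900.0),
    ("2024-03-01", 1500.0),
]


def value_as_of(history, decision_date):
    """Return the (effective_date, value) pair in effect on decision_date.

    Returns None when no observation existed yet, so a caller can refuse
    to compute the feature instead of silently using later data.
    """
    dates = [d for d, _ in history]
    idx = bisect_right(dates, decision_date)
    if idx == 0:
        return None
    return history[idx - 1]


print(value_as_of(balance_history, "2024-02-20"))
print(value_as_of(balance_history, "2024-01-01"))
```

A decision made on 2024-02-20 must see the February observation, not the March one that arrived later; the second call shows the case where nothing was known yet.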

Model Lineage

Model lineage records how a model artifact was produced and promoted, including its training data, code revision, evaluation outputs, approval state, and deployment target.

Decision Lineage

Decision lineage records how an inference became an operational outcome, connecting the request, feature state, model version, policy logic, human intervention, and final action.

Most firms spread these layers across different teams and tools. That may be operationally convenient, but it is fragile from a governance standpoint.

A trustworthy control environment requires them to compose into a single graph.
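One way to picture a single composed graph is as a set of "derived from" edges that cross all four layers, so that a traversal starting at a decision reaches the original sources. The sketch below assumes such edges already exist; the node identifiers and the `upstream` and `sources` helpers are invented for illustration.

```python
# Each key was derived from the nodes in its list. Identifiers are hypothetical.
derived_from = {
    "decision:app-1001": ["score:app-1001", "policy:credit-rules@v7"],
    "score:app-1001": ["model:affordability@v3", "features:app-1001@run-0017"],
    "features:app-1001@run-0017": ["dataset:customer_norm@v42"],
    "model:affordability@v3": ["dataset:training_2023q4@v5"],
    "dataset:customer_norm@v42": ["dataset:customer_raw@v42"],
}


def upstream(node, edges):
    """Collect every node reachable by following derived-from edges."""
    seen = set()
    stack = [node]
    while stack:
        current = stack.pop()
        for parent in edges.get(current, []):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


def sources(node, edges):
    """Upstream nodes with no recorded parents: the origins of the chain."""
    return sorted(n for n in upstream(node, edges) if n not in edges)


print(sources("decision:app-1001", derived_from))
```

If the layers lived in separate tools with no shared identifiers, the traversal would stop at the first boundary, which is the fragility described above.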

What Good Looks Like

The test is whether a firm can trace a business outcome back to source data and control logic.

A Credit Decisioning Example

Consider a credit decisioning flow. Customer data is ingested and normalized. A feature pipeline computes affordability and exposure measures. A model scores the application. A policy layer applies thresholds, overrides, and business rules. The final outcome is written into a workflow.

If the institution later needs to explain that decision, a useful lineage record cannot stop at the model artifact. It needs to show which source datasets fed the feature set, which transformation run produced the features used for that customer, which model version produced the score, which policy version converted the score into an action, and whether an override path was involved.
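The references listed above could be captured in a single immutable record written at decision time. The following is a sketch under that assumption; the `DecisionRecord` fields and all identifier values are hypothetical and would need to match whatever identity scheme the institution actually uses.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class DecisionRecord:
    decision_id: str
    source_datasets: Tuple[str, ...]  # versioned dataset identifiers
    feature_run_id: str               # the transformation run that built the features
    model_version: str                # the model that produced the score
    policy_version: str               # the policy that turned the score into an action
    override_id: Optional[str]        # set only when a human override was applied
    outcome: str


def explain(record):
    """Render the evidence chain for one decision as readable lines."""
    lines = [
        f"decision {record.decision_id}: {record.outcome}",
        f"  sources: {', '.join(record.source_datasets)}",
        f"  features: {record.feature_run_id}",
        f"  model: {record.model_version}",
        f"  policy: {record.policy_version}",
    ]
    override = record.override_id or "none"
    lines.append(f"  override: {override}")
    return lines


record = DecisionRecord(
    decision_id="app-1001",
    source_datasets=("customer_norm@v42", "bureau_feed@v9"),
    feature_run_id="run-0017",
    model_version="affordability@v3",
    policy_version="credit-rules@v7",
    override_id=None,
    outcome="declined",
)
print("\n".join(explain(record)))
```

Freezing the dataclass is a deliberate choice: a record that can be edited after the fact is a report, not evidence.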

The same pattern applies in fraud, transaction monitoring, and trading environments. The specifics change. The control requirement does not.

Where Enforcement Actually Starts

Once lineage sits in the execution path, it can support real enforcement:

- block execution when an input dataset version is unknown

- prevent feature use when quality assertions fail

- block model promotion when training provenance is incomplete

- prevent downstream actioning when the decision record lacks model, feature, or policy references
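The last of these checks can be sketched as a gate that sits between the decision service and the downstream workflow. This is a minimal illustration, assuming decisions arrive as dictionaries; the required field names, the `LineageGateError` exception, and the `release_for_actioning` function are hypothetical.

```python
REQUIRED_REFERENCES = ("model_version", "feature_run_id", "policy_version")


class LineageGateError(Exception):
    """Raised when a decision lacks the provenance needed to act on it."""


def missing_references(decision):
    """List the required lineage references that are absent or empty."""
    return [key for key in REQUIRED_REFERENCES if not decision.get(key)]


def release_for_actioning(decision):
    """Pass the decision downstream only if its lineage is complete."""
    missing = missing_references(decision)
    if missing:
        raise LineageGateError(
            f"decision {decision.get('decision_id')} blocked; missing: {missing}"
        )
    return decision


complete = {
    "decision_id": "app-1001",
    "model_version": "affordability@v3",
    "feature_run_id": "run-0017",
    "policy_version": "credit-rules@v7",
}
incomplete = {"decision_id": "app-1002", "model_version": "affordability@v3"}

release_for_actioning(complete)  # passes through unchanged
try:
    release_for_actioning(incomplete)
except LineageGateError as err:
    print(err)
```

The gate fails closed: a decision without references is stopped rather than logged and allowed through, which is what moves lineage from reporting into control.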

At that point, lineage stops being a catalog feature. It becomes part of how the institution governs execution.

The Real Standard

Auditability starts when the system can prove what happened.

From Reconstruction to Evidence

When provenance is emitted at runtime and connected across the full decision path, auditability becomes a system property rather than a reporting exercise.

The institution is no longer asking, “What do we think happened?”

It is asking, “What can we prove happened, and do we have the control evidence to defend it?”

That is the standard that matters in financial services. It supports internal audit, model validation, compliance review, incident investigation, and regulator engagement.

A reconstructed graph may help with debugging.

A verifiable provenance graph helps an institution defend the integrity of its decisions.

That is the difference between observability and governance.

If you cannot trace it, you cannot trust it.

And if you cannot trust it, you cannot govern it.

What Comes Next

Traceability is necessary, but it is not the final layer.

Semantic Consistency

Once a system can trace transformations reliably, the next constraint is semantic consistency.

A graph can tell you that dataset X fed feature set Y and that model Z produced decision Q. It does not tell you whether “customer,” “active account,” “risk score,” or “approved” mean the same thing across teams and systems.

That is the next layer of governance architecture: semantic standardization.

Lineage makes systems traceable.

A semantic layer makes them interpretable.

Without provenance, AI systems are opaque.

Without semantics, they are traceable but still hard to reason about.

That is where mature AI governance has to go next.

The next article will move from traceability to meaning: how institutions create a semantic layer that standardizes definitions, makes policy terms machine-interpretable, and ensures the same business concepts hold across data, models, controls, and reporting.

Series: The Architecture of Governed AI Systems

- Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs Human Metadata

- Identity for AI Systems: The Missing Layer of AI Governance

- Data Lineage as the Trust Backbone of AI Systems

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI (coming next)

Follow the Series

We are continuing to explore the architecture required for governed AI systems. Upcoming articles will cover identity infrastructure, policy engines, and AI control planes.

Subscribe to the Data Sense newsletter to receive updates when the next article is published.