AI systems are starting to behave less like tools and more like participants in an operating environment. They retrieve data, apply transformations, and trigger downstream actions with increasing autonomy. As discussed in the shift toward machine-operational metadata, these systems are no longer just interacting with documentation. They are interacting with structured, executable context.

Identity is what binds these systems together across data, decisions, and execution.

In practical terms, identity for AI systems refers to cryptographically verifiable identifiers for agents, datasets, and transformations that enable traceability, accountability, and enforceable governance.

Without identity, a system can describe what exists, including datasets, pipelines, and agents, but it cannot reliably establish who is acting, what is being acted on, or how a result was produced in a way that can be verified.

This is where most governance efforts in enterprise AI implementations currently fall short.

Lineage exists, but it is often reconstructed from logs rather than derived from verifiable relationships. Policies exist, but they are enforced inconsistently because the system lacks stable identifiers to evaluate against. Audit trails exist, but they describe activity without proving it.

Identity, in this context, goes beyond naming components. It is about making them uniquely identifiable, verifiable, and accountable at runtime. It is what turns metadata from descriptive context into something the system can rely on when making decisions.

Identity as the Binding Layer

Modern data systems are built on references.

We reference:

- tables by name

- pipelines by ID

- services by account

But references are not guarantees. They point to something, but they do not prove anything about it.

A table name does not guarantee the contents of the table.

A pipeline ID does not guarantee the logic that executed.

A service account does not capture the specific authority under which an action occurred.

What’s missing is a way to bind identity directly to:

- the contents of data

- the logic of transformations

- the authority of the actor

This is the difference between a system that describes itself and one that can be verified.

Three Domains of Identity for AI Systems

To make governance operational, identity needs to exist across three distinct but connected domains.

Agent Identity

An AI agent is not just a process; it is an execution context operating under delegated authority.

For identity to be meaningful at this layer, it must carry:

- a persistent identifier independent of environment

- a clear delegation chain (who authorized this agent)

- scoped permissions tied to that identity

This allows decisions to be evaluated against the specific agent instance, not a generic role or label.
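
As a rough illustration of the three properties above, the following Python sketch models an agent identity as an immutable record. The class name `AgentIdentity`, its fields, and the identifier strings are hypothetical and chosen only for this example; a real system would back the identifier with cryptographic keys rather than a plain string.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str                      # persistent identifier, independent of environment
    delegation_chain: tuple[str, ...]  # who authorized this agent, root first
    scopes: frozenset[str]             # permissions tied to this identity

    def may(self, action: str) -> bool:
        """Check an action against this specific agent's scopes."""
        return action in self.scopes

    def delegate(self, child_id: str, scopes: set[str]) -> "AgentIdentity":
        """Create a delegated agent that holds a subset of this agent's scopes."""
        if not scopes <= self.scopes:
            raise PermissionError("cannot delegate scopes the delegator does not hold")
        return AgentIdentity(
            agent_id=child_id,
            delegation_chain=self.delegation_chain + (self.agent_id,),
            scopes=frozenset(scopes),
        )


# Hypothetical identifiers, for illustration only.
root = AgentIdentity(
    agent_id="agent:reporting-root",
    delegation_chain=("org:example",),
    scopes=frozenset({"read:sales", "write:reports"}),
)
worker = root.delegate("agent:summarizer-01", {"read:sales"})
```

Here `worker.may("write:reports")` is false even though the delegating agent holds that scope, which is the point of evaluating decisions against the specific agent instance.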

Dataset Identity

Data identity needs to move beyond location-based references.

Instead of pointing to where data lives, identity should reflect:

- the exact contents of the dataset (e.g., hash-based binding)

- its version over time (immutable states)

- its origin and ownership

This creates a stable reference point. When a system uses a dataset, it can verify that the data matches what was intended and not just assume it based on a path or table name.
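
A minimal sketch of hash-based binding, assuming SHA-256 as the digest and a plain dictionary as the identity record. The function names `dataset_identity` and `verify_dataset`, the field names, and the sample data are illustrative assumptions, not part of any specific standard.

```python
import hashlib


def dataset_identity(content: bytes, version: int, owner: str) -> dict:
    """Build an identity record bound to the exact bytes of the dataset."""
    return {
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "version": version,  # each version is an immutable state
        "owner": owner,      # origin and ownership
    }


def verify_dataset(content: bytes, identity: dict) -> bool:
    """Recompute the digest at the moment of use and compare it to the record."""
    return hashlib.sha256(content).hexdigest() == identity["content_sha256"]


original = b"id,amount\n1,100\n2,250\n"
identity = dataset_identity(original, version=1, owner="finance-team")

unchanged_ok = verify_dataset(original, identity)
tampered_ok = verify_dataset(b"id,amount\n1,100\n2,999\n", identity)
```

The path or table name never enters the check. Only the contents do, so a silently modified file fails verification even if it sits at the expected location.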

Transformation Identity

This is where most real-world systems lose fidelity.

Transformations, such as queries, pipelines, and model inferences, shape outcomes but are rarely well documented. To make them governable, each transformation needs to carry its own identity, including:

- a reference to the logic executed (code or model version)

- the identities of its inputs and outputs

- the execution context (agent, time, environment)

When this is in place, lineage stops being a narrative and becomes a set of verifiable relationships.
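
One way to sketch this is to derive a transformation identifier from a canonical record of the logic, the input and output identities, and the execution context. The function name `transformation_id`, the record layout, and the sample values are assumptions made for illustration; sorting the inputs and outputs is a design choice that treats them as unordered sets.

```python
import hashlib
import json


def transformation_id(logic: str, input_ids: list[str],
                      output_ids: list[str], context: dict) -> str:
    """Derive an identifier bound to logic, inputs, outputs, and context."""
    record = {
        "logic_sha256": hashlib.sha256(logic.encode("utf-8")).hexdigest(),
        "inputs": sorted(input_ids),
        "outputs": sorted(output_ids),
        "context": context,  # e.g. agent, time, environment
    }
    # Canonical JSON (sorted keys, fixed separators) so equal records hash equally.
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical values, for illustration only.
ctx = {"agent": "agent:summarizer-01", "time": "2024-01-01T00:00:00Z", "env": "prod"}
query = "SELECT region, SUM(amount) FROM sales GROUP BY region"

tid = transformation_id(query, ["ds:a", "ds:b"], ["ds:out"], ctx)
tid_reordered = transformation_id(query, ["ds:b", "ds:a"], ["ds:out"], ctx)
tid_changed = transformation_id(query + " LIMIT 10", ["ds:a", "ds:b"], ["ds:out"], ctx)
```

Any change to the logic, the inputs, the outputs, or the context yields a different identifier, so two lineage edges with the same identifier refer to the same transformation.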

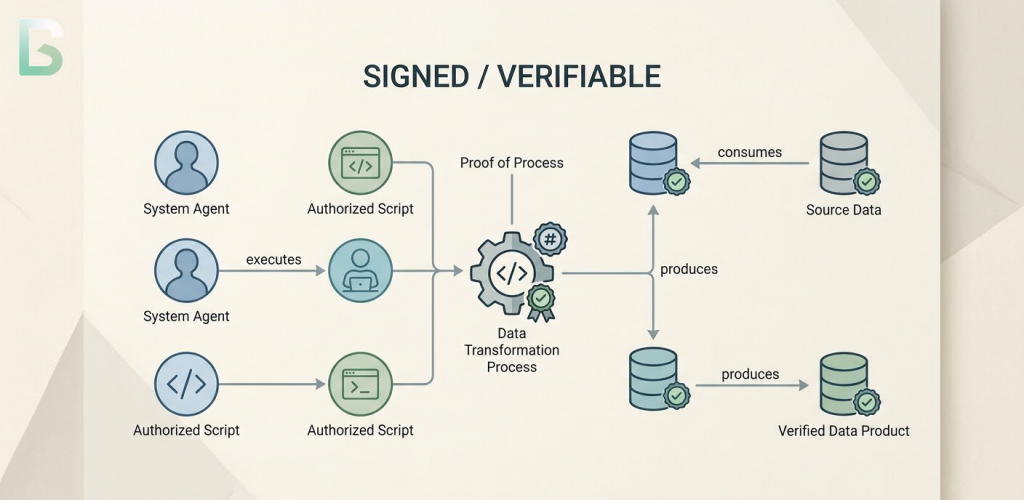

From Lineage to Verifiable Provenance

Once identities are consistently applied, lineage changes in a fundamental way.

Instead of stitching together logs to answer what likely happened, the system can assert what provably happened.

Each step in a process becomes a claim:

- a specific agent executed a transformation

- that transformation consumed specific datasets

- it produced a specific output

And critically, those claims can be checked.

This is what turns lineage into provenance: not just a record of events, but a structure that can be validated independently of the system that produced it.
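
To make "claims can be checked" concrete, the sketch below verifies a provenance claim by recomputing digests of the artifacts it names. The claim layout and the function `check_claim` are hypothetical. The sketch covers only the content bindings; proving which agent made the claim would additionally require a signature.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def check_claim(claim: dict, inputs: dict[str, bytes], output: bytes) -> list[str]:
    """Return a list of problems; an empty list means the claim matches the artifacts."""
    problems = []
    for name, expected in claim["inputs"].items():
        actual = inputs.get(name)
        if actual is None:
            problems.append(f"missing input: {name}")
        elif sha256_hex(actual) != expected:
            problems.append(f"input mismatch: {name}")
    if sha256_hex(output) != claim["output"]:
        problems.append("output mismatch")
    return problems


# Hypothetical artifacts and claim, for illustration only.
sales = b"region,amount\nwest,100\n"
report = b"west: 100\n"
claim = {
    "agent": "agent:summarizer-01",
    "inputs": {"sales": sha256_hex(sales)},
    "output": sha256_hex(report),
}

valid_result = check_claim(claim, {"sales": sales}, report)
altered_result = check_claim(claim, {"sales": sales}, b"west: 999\n")
```

The check needs only the claim and the artifacts, not access to the system that produced them.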

Why Cryptographic Identity Embeds Verifiable Trust

Traditional metadata systems rely on trust in the platform maintaining them. Cryptographic identity shifts that trust into something verifiable.

It enables:

- content-bound identifiers (data is what its hash says it is)

- signed assertions (actions can be attributed and verified)

- tamper-evident relationships (changes break the chain of trust)

This doesn’t eliminate the need for governance systems, but it gives them something solid to operate on.
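
The idea of a signed assertion can be sketched with Python's standard library. Note the assumption: this example uses an HMAC (a keyed hash with a shared secret) purely as a stand-in, because the standard library has no public-key signatures. A real deployment would use an asymmetric signature scheme so that verifiers never hold the signing key. The function names and the sample assertion are illustrative.

```python
import hashlib
import hmac
import json


def sign_assertion(assertion: dict, key: bytes) -> str:
    """Compute a tag over the canonical form of the assertion (HMAC stand-in)."""
    payload = json.dumps(assertion, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_assertion(assertion: dict, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign_assertion(assertion, key), tag)


# Hypothetical key and assertion, for illustration only.
key = b"demo-key-not-for-production"
assertion = {"agent": "agent:summarizer-01", "action": "read", "dataset": "ds:a"}
tag = sign_assertion(assertion, key)

intact_ok = verify_assertion(assertion, tag, key)
modified_ok = verify_assertion({**assertion, "action": "write"}, tag, key)
```

Changing any field of the assertion after signing causes verification to fail, which is the tamper-evident property described above.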

How KERI Helps with Verification

KERI (Key Event Receipt Infrastructure) is a decentralized framework for managing self-certifying digital identifiers without depending on a central ledger or authority.

KERI introduces a model of self-certifying identifiers that are:

- derived from cryptographic keys

- portable across systems

- capable of maintaining continuity over time

For AI systems, this allows agents and processes to exist as persistent, verifiable entities, rather than environment-bound constructs.

More importantly, KERI’s event-based model aligns naturally with how AI systems operate.

Each action, including data access, transformation, and output generation, can be treated as a signed event, contributing to a verifiable history rather than a mutable log.
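
The contrast between a verifiable history and a mutable log can be sketched with a hash-chained event list, in which every event commits to the digest of the one before it. This is a deliberately simplified illustration and not KERI's actual event format, encoding, or API: signatures are omitted, and the field names and helper functions are assumptions.

```python
import hashlib
import json


def _digest(event: dict) -> str:
    canonical = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_event(log: list[dict], action: str, detail: dict) -> None:
    """Append an event that commits to the digest of the previous event."""
    prior = _digest(log[-1]) if log else None
    log.append({"seq": len(log), "prior": prior, "action": action, "detail": detail})


def verify_log(log: list[dict]) -> bool:
    """Check sequence numbers and that each event points at its true predecessor."""
    for i, event in enumerate(log):
        expected_prior = _digest(log[i - 1]) if i > 0 else None
        if event["seq"] != i or event["prior"] != expected_prior:
            return False
    return True


# Hypothetical events, for illustration only.
log: list[dict] = []
append_event(log, "data_access", {"dataset": "ds:a"})
append_event(log, "transformation", {"logic": "aggregate-v2"})
append_event(log, "output", {"dataset": "ds:out"})

before_tamper = verify_log(log)
log[0]["detail"]["dataset"] = "ds:other"  # rewrite history
after_tamper = verify_log(log)
```

Editing an earlier event breaks the link held by its successor. The chain alone cannot protect the most recent event, which is one reason signing each event matters in a real system.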

The vLEI for Verifiable Organizational Identity

The vLEI (verifiable Legal Entity Identifier) extends identity beyond systems into institutions.

It allows organizations to issue verifiable credentials that can be used to:

- bind agents to legal entities

- delegate authority in a traceable way

- attribute actions to accountable organizations

This becomes essential when AI systems operate across boundaries—between departments, companies, or jurisdictions—where trust cannot rely on shared infrastructure alone.

Alignment with the NIST AI Risk Management Framework

Frameworks like NIST’s AI Risk Management Framework emphasize:

- traceability

- accountability

- auditability

These are often implemented as processes layered on top of systems. Identity shifts them into the system itself.

- Traceability becomes the ability to follow identity-linked relationships

- Accountability becomes the ability to bind actions to agents and organizations

- Auditability becomes the ability to verify those bindings independently

With identity in place, these principles become operational at the system level.

What This Enables in Practice

Once identity is embedded at this level, several things become possible that are otherwise fragile:

- policies can be evaluated against the exact inputs and actors involved in a decision

- data integrity can be validated at the moment of use, not assumed

- transformations can be reproduced and verified independently

- cross-system interactions can carry trust without relying on shared control planes

This is where governance moves from interpretation to enforcement.
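
As a sketch of what enforcement could look like, the example below evaluates a policy against the exact agent and the exact dataset contents involved in a request, rather than against a role name or a path. The `Policy` class, the `evaluate` function, and all identifiers are hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_agents: frozenset[str]
    approved_dataset_digests: frozenset[str]


def evaluate(policy: Policy, agent_id: str, dataset_content: bytes) -> tuple[bool, str]:
    """Decide at the moment of use, based on who is acting and what the data actually is."""
    if agent_id not in policy.allowed_agents:
        return False, "agent not authorized"
    digest = hashlib.sha256(dataset_content).hexdigest()
    if digest not in policy.approved_dataset_digests:
        return False, "dataset contents not approved"
    return True, "allowed"


# Hypothetical data and identifiers, for illustration only.
approved = b"id,amount\n1,100\n"
policy = Policy(
    allowed_agents=frozenset({"agent:summarizer-01"}),
    approved_dataset_digests=frozenset({hashlib.sha256(approved).hexdigest()}),
)

decision_ok = evaluate(policy, "agent:summarizer-01", approved)
decision_bad_agent = evaluate(policy, "agent:unknown", approved)
decision_bad_data = evaluate(policy, "agent:summarizer-01", b"id,amount\n1,999\n")
```

Because the decision depends only on verifiable identifiers, the same request always yields the same result, and each denial carries a reason that can be recorded alongside it.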

Identity as the Foundation of Trust

Every layer in the AI governance stack—metadata, lineage, policy, evaluation—assumes that the system can reliably identify what it is operating on.

Without that, each layer degrades in subtle ways. Metadata describes but cannot be enforced. Policies define intent but cannot be applied deterministically. Audit trails capture activity but cannot be verified independently.

Identity changes that dynamic. It anchors data to its contents, transformations to their logic, and agents to their authority. It gives systems the ability to validate what they are operating on at the moment of execution, rather than relying on assumptions carried over from design time.

But identity alone is not sufficient.

Once identities exist, they need to be connected. Systems need to understand not just what something is, but how it relates to everything else: how data flows, how transformations build on one another, and how decisions are ultimately constructed.

This is where the next layer emerges.

In the next article, we’ll explore how these identities form the backbone of something more powerful: a lineage graph that can be trusted—not as documentation, but as the system of record for how AI decisions are made.

Series: The Architecture of Governed AI Systems

- Governance is the Precondition for Scalable AI Agents

- Metadata for AI Agents vs Human Metadata

- Identity for AI Systems: The Missing Layer of AI Governance

- Data Lineage as the Trust Backbone of AI Systems

- Semantic Layers: The Hidden Infrastructure Behind Scalable AI (coming next)

Follow the Series

We are continuing to explore the architecture required for governed AI systems. Upcoming articles will cover identity infrastructure, policy engines, and AI control planes.
